You have now designed a nice web site, uploaded it to a hosting server, and you think you are ready to go. Not quite. A few more steps will noticeably improve the visibility of your web site.

I am not a search engine optimization (SEO) professional, and qualified people write whole books about SEO, which can be a very complex subject. But I have been confronted with the issue, I have searched the Internet for answers, and I publish my findings here. If you apply the 80-20 Pareto rule, you can get decent results in three steps that you may want to repeat periodically:

- Make sure your web site is right for search engine crawling robots;

- Submit your web site to search engines;

- Get referral links.

I may have done something right, since I have managed to get our web site onto the first page of results on MSN and Yahoo. I know from experience, however, that the only way to get a high ranking on Google is to obtain referral links.

1) Make your web site right

First, you need to make sure that search engine robots can read your web site. You also need to assist search engine robots by telling them which pages to index and how to categorize your web site.

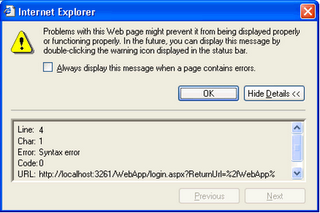

Make sure your HTML is compliant

A web design environment like Dreamweaver will warn you of any compliance issues. You can also use a text browser like Lynx to check that your web site displays properly to search engines, since search engine robots read sites in a similar way. Another option is to check your pages at http://www.smart-it-consulting.com/internet/google/googlebot-spoofer/index.htm.

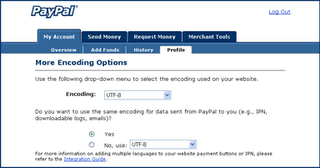

It is also important to set the page encoding and content language. I personally recommend using UTF-8 encoding, even on English pages. Contrary to what people often think, UTF-8 is not a double-byte encoding: it stores ASCII characters in a single byte, so an English page is the same size in UTF-8 as in ISO-8859-1.
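
You can check the size claim yourself by encoding the same text both ways. This is a minimal Python sketch; the two sample sentences are arbitrary and only serve as an illustration.

```python
# Compare the encoded size of the same text in UTF-8 and ISO-8859-1 (Latin-1).
english = "Search engines read your pages as plain text."
french = "Les moteurs de recherche lisent vos pages écrites en français."

for label, text in (("English", english), ("French", french)):
    utf8_size = len(text.encode("utf-8"))
    latin1_size = len(text.encode("iso-8859-1"))
    print(f"{label}: utf-8={utf8_size} bytes, iso-8859-1={latin1_size} bytes")
```

The English sentence is pure ASCII, so both sizes match. Accented letters such as "é" and "ç" take two bytes in UTF-8 but one in ISO-8859-1, so the French sentence is two bytes larger in UTF-8.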

Describe your web site properly

The title, meta description, meta keywords and image alternate text all help search engines categorize your web site properly.
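
As an illustration, the Python sketch below assembles a page head carrying these elements, together with the encoding and language settings discussed above. The helpers `build_page` and `img_tag` are hypothetical names written for this example; the markup uses the HTML5 forms `<meta charset="utf-8">` and the `lang` attribute, and all values are escaped so that characters like "&" do not break the HTML.

```python
from html import escape

def build_page(title, description, keywords, body_html, lang="en"):
    """Return a minimal HTML page declaring encoding, language and meta data."""
    return (
        "<!DOCTYPE html>\n"
        f'<html lang="{escape(lang)}">\n'
        "<head>\n"
        '  <meta charset="utf-8">\n'
        f"  <title>{escape(title)}</title>\n"
        f'  <meta name="description" content="{escape(description)}">\n'
        f'  <meta name="keywords" content="{escape(", ".join(keywords))}">\n'
        "</head>\n"
        f"<body>\n{body_html}\n</body>\n"
        "</html>\n"
    )

def img_tag(src, alt):
    """Return an <img> tag with alternate text that robots can read."""
    return f'<img src="{escape(src)}" alt="{escape(alt)}">'

page = build_page(
    "Acme Widgets",
    "Hand-made widgets & gadgets.",
    ["widgets", "gadgets"],
    img_tag("logo.png", "Acme Widgets logo"),
)
print(page)
```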

Getting your keywords right is a difficult exercise:

- you should limit yourself to 10 to 20 keywords;

- the more targeted your keywords, the more effective they are at helping people find you, but you do not want to be too narrow;

- as far as Google, Yahoo and MSN are concerned, you do not need both the singular and the plural of a keyword, and the order of the words in a keyword seems to make no difference.
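
Those rules can be applied mechanically to prune a draft keyword list. The Python sketch below assumes a deliberately crude singular form (drop a trailing "s", but not "ss") and treats keywords with the same words in any order as duplicates; the function names are hypothetical, and the naive singularization will mangle some words (for example "news"), so review the result by hand.

```python
def normalize(keyword):
    """Map a keyword to a canonical form: lower case, naive singular, sorted words."""
    words = []
    for word in keyword.lower().split():
        # Naive singularization (assumption): strip a trailing "s" but not "ss".
        if len(word) > 3 and word.endswith("s") and not word.endswith("ss"):
            word = word[:-1]
        words.append(word)
    # Sorting makes "web design" and "design web" compare equal.
    return " ".join(sorted(words))

def dedupe_keywords(keywords, limit=20):
    """Keep the first keyword of each canonical form, up to `limit` keywords."""
    seen = set()
    result = []
    for keyword in keywords:
        key = normalize(keyword)
        if key not in seen:
            seen.add(key)
            result.append(keyword)
    return result[:limit]

print(dedupe_keywords(["web design", "design web", "web designs", "SEO", "seo", "hosting"]))
# prints ['web design', 'SEO', 'hosting']
```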

The following tools will help you build your keywords meta tag:

Add an address and a privacy policy

An address and a privacy policy will not give you a high ranking, but without them you may not get one, because your site won't look like it belongs to a serious company. To add a privacy policy for your site, follow the steps at http://www.the-dma.org/privacy/creating.shtml#form.

Add content rating

To add content rating for your site, follow the steps at http://www.icra.org/label/

Create sitemaps

Sitemaps will tell search engines which pages to look for. To create sitemaps, follow the steps at http://www.xml-sitemaps.com/. You can get more information at http://www.sitemaps.org/.
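
If you prefer to generate the file yourself, a sitemap is a small XML document following the protocol described at sitemaps.org. The Python sketch below builds one with the standard library; `build_sitemap` is a hypothetical helper, and the example.com URLs and dates are placeholders for your own pages.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls):
    """Return sitemap XML (sitemaps.org protocol 0.9) for (loc, lastmod) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in urls:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # ElementTree escapes "&" and "<"
        if lastmod:
            # Optional last modification date in W3C format, e.g. 2024-01-31.
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

pages = [
    ("http://www.example.com/", "2024-01-31"),
    ("http://www.example.com/products.html", None),
]
xml = build_sitemap(pages)
print(xml)
```

Save the output as sitemap.xml at the root of your web site.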

You are encouraged to submit your sitemaps to search engines, in particular:

Create a robots.txt file

The main purpose of a robots.txt file is to tell search engine robots which parts of your site they should not crawl. More information about robots.txt files is available at http://www.robotstxt.org/wc/robots.html.
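
Here is a small sample robots.txt, checked with Python's standard `urllib.robotparser` module to confirm which URLs a robot may fetch. The /private/ and /cgi-bin/ directories and the example.com addresses are placeholders; the `Sitemap:` line is an optional extension that the major search engines recognize.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt: block every robot from /private/ and /cgi-bin/,
# and tell robots where the sitemap lives.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Disallow: /cgi-bin/

Sitemap: http://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for path in ("/index.html", "/private/accounts.html"):
    url = "http://www.example.com" + path
    print(path, "allowed" if parser.can_fetch("Googlebot", url) else "blocked")
# prints:
# /index.html allowed
# /private/accounts.html blocked
```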

Add web site monitoring and statistics

If your web site is inaccessible too often, search engines will downgrade it. You therefore need to monitor your web site and keep your ISP honest. The following monitoring services range from free to high-end:

Most hosting packages come with AWStats and Webalizer, which give poor statistics. There is of course WebTrends (http://www.webtrends.com/), but I can only recommend the free Google Analytics (http://www.google.com/analytics/).
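
The monitoring services mentioned above essentially fetch your pages at regular intervals and record whether they respond. The Python sketch below shows that basic availability probe; `check_site` is a hypothetical helper, and a real service adds scheduling, checks from several locations, and alerting.

```python
import time
import urllib.error
import urllib.request

def check_site(url, timeout=10.0):
    """Fetch `url` once; return (is_up, detail, seconds_taken)."""
    start = time.monotonic()
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            status = response.status  # redirects are followed automatically
        return (200 <= status < 400, f"HTTP {status}", time.monotonic() - start)
    except urllib.error.HTTPError as err:
        # The server answered, but with an error status such as 404 or 500.
        return (False, f"HTTP {err.code}", time.monotonic() - start)
    except OSError as err:
        # URLError (DNS failure, connection refused) and timeouts end up here.
        return (False, str(err), time.monotonic() - start)
```

For example, running `check_site("http://www.example.com/")` every few minutes from a scheduler such as cron, and logging each result, gives you a simple record of how often your site was down.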

2) Submit your web site to search engines

Most ISPs offer search engine submission as standard with their hosting packages. Unless you are very lazy, do not pay for a submission service and do not buy submission software. Submitting your URLs to Google, MSN and Yahoo only takes 10 minutes and covers more than 80% of search engine traffic.

This is especially true if you already own a web site that search engines have indexed. In that case, you just need a hyperlink from that site to the new one, and the search engine crawlers will find and index your new site automatically.

3) Get referral links

Getting referral links is the only thing that guarantees high rankings. Basically, the more a page is referenced on the web, the more valuable it is considered to be to the Internet community, and so the higher it ranks in searches. Additionally, a reference on http://www.bbc.co.uk/ is worth more than a reference on http://www.acme.tf/.
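
The idea that a link from a well-referenced site counts for more is what the published PageRank formula captures. The Python sketch below is a simplified textbook version computed by power iteration; it is only an illustration, since today's search engines combine it with many other signals. The page names in the example graph are hypothetical: "popular" is linked by three fan pages and links to "site_a", while "obscure" has no inbound links and links to "site_b".

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration.

    `links` maps each page to the list of pages it links to. Pages with no
    outgoing links spread their score evenly over all pages.
    """
    pages = sorted(set(links) | {p for targets in links.values() for p in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page gets a small baseline score from "random jumps".
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page passes its score on, split among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank

web = {
    "fan1": ["popular"], "fan2": ["popular"], "fan3": ["popular"],
    "popular": ["site_a"],
    "obscure": ["site_b"],
}
ranks = pagerank(web)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:8} {score:.3f}")
```

Both "site_a" and "site_b" receive exactly one link, yet "site_a" ends up with the higher score because its link comes from a page that is itself well referenced.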

So you need to work on getting other web sites to reference your own. This is an everyday job that only your organization can successfully perform. You should get a decent ranking on Google (within the first three pages of results) with about 100 references, but obviously some topics are more crowded than others. The earlier you start, the better. Good luck!

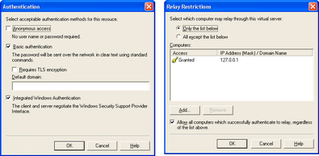

You can certainly be more restrictive depending on your requirements, once you have the above configuration working properly.

Click the More Options button.